Upload problems?

11 Jan 2007 18:45:15 UTC

Topic 192297

Anyone else having problems? Looks like I have been since maybe 10:30pm last night: uploading, but not 'reporting', maybe because the scheduler is off-line?

It seems that there is lots going on. This E@H run seems to be limping towards the finish line, but it's still making decent progress of a little more than 1% of WU per day. The end is near.

Seems to have fixed itself right after I started this string. Maybe someone kick-started it again!

Well I have noticed scheduler probs, too, but they never lasted more than a few hours... I'm glad I got myself a somewhat larger cache again, though.

Part of the problem is probably that the current all short WU mix is hammering the server ~9x harder than the mostly long WUs were earlier in the run.
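To put a rough number on that, here is a back-of-the-envelope sketch in Python. It assumes that server load scales with the number of results reported per day and that every host crunches around the clock. The host count and the two runtimes are made-up placeholders chosen only to give a 9:1 ratio; they are not actual project figures.

```python
# Rough illustration: results reported per day scale inversely with WU runtime.
# All three constants are hypothetical placeholders, not Einstein@Home data.

def results_per_day(hosts: int, hours_per_wu: float) -> float:
    """Results reported per day if every host crunches 24 hours a day."""
    return hosts * 24.0 / hours_per_wu

HOSTS = 1000          # hypothetical number of active hosts
LONG_WU_HOURS = 9.0   # hypothetical runtime of a long WU
SHORT_WU_HOURS = 1.0  # hypothetical runtime of a short WU

long_load = results_per_day(HOSTS, LONG_WU_HOURS)
short_load = results_per_day(HOSTS, SHORT_WU_HOURS)
load_ratio = short_load / long_load

print(f"long: {long_load:.0f}/day, short: {short_load:.0f}/day, ratio: {load_ratio:.1f}x")
```

The host count cancels out of the ratio, so only the relative runtimes of short and long WUs matter for the comparison.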

I also think so!

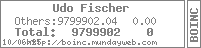

On the 'Server Status page' you can see:

'oldest unsent result': 0 d 0 h 0 m

'BOINC scheduler': Not running

The scheduler is unable to create WUs fast enough...

Udo

The scheduler doesn't create the work - it just dishes it out :).

The work generator process is running so work is being created, it would seem. All the download mirror sites are running. My boxes seem to be getting work as required without too much difficulty right now. However, things could change at a moment's notice!!

Cheers,

Gary.

Do the WUs that have been sent out but are not being crunched, because the machines are in a one-week coma (some machines have 300+ waiting in the cache), have an effect on the servers/database?

My machine went into a 7 day coma, but as soon as I saw that the project was running again, I pressed the Update button and it came out of the coma.

Install BOINC 5.8 and you won't have the problem with the one-week scheduler deferral anymore.

Not worth the bother in my case, as I have both my boxes right here with me and have a look at BOINC from time to time anyway, so I can do just what Martin said. I don't want to trade in an app that has been running stable for months for one with a feature I don't really need (yeah, I also do server administration ^^ maybe that influences the way I run my private boxes as well).

But, Martin, you should consider that quite a few people have five or more boxes attached, or use hosts they can only administer at certain times or remotely. In that case, the new feature must be a blessing.